Soft Tracking Using Contacts for Cluttered Objects (STUCCO)

Abstract

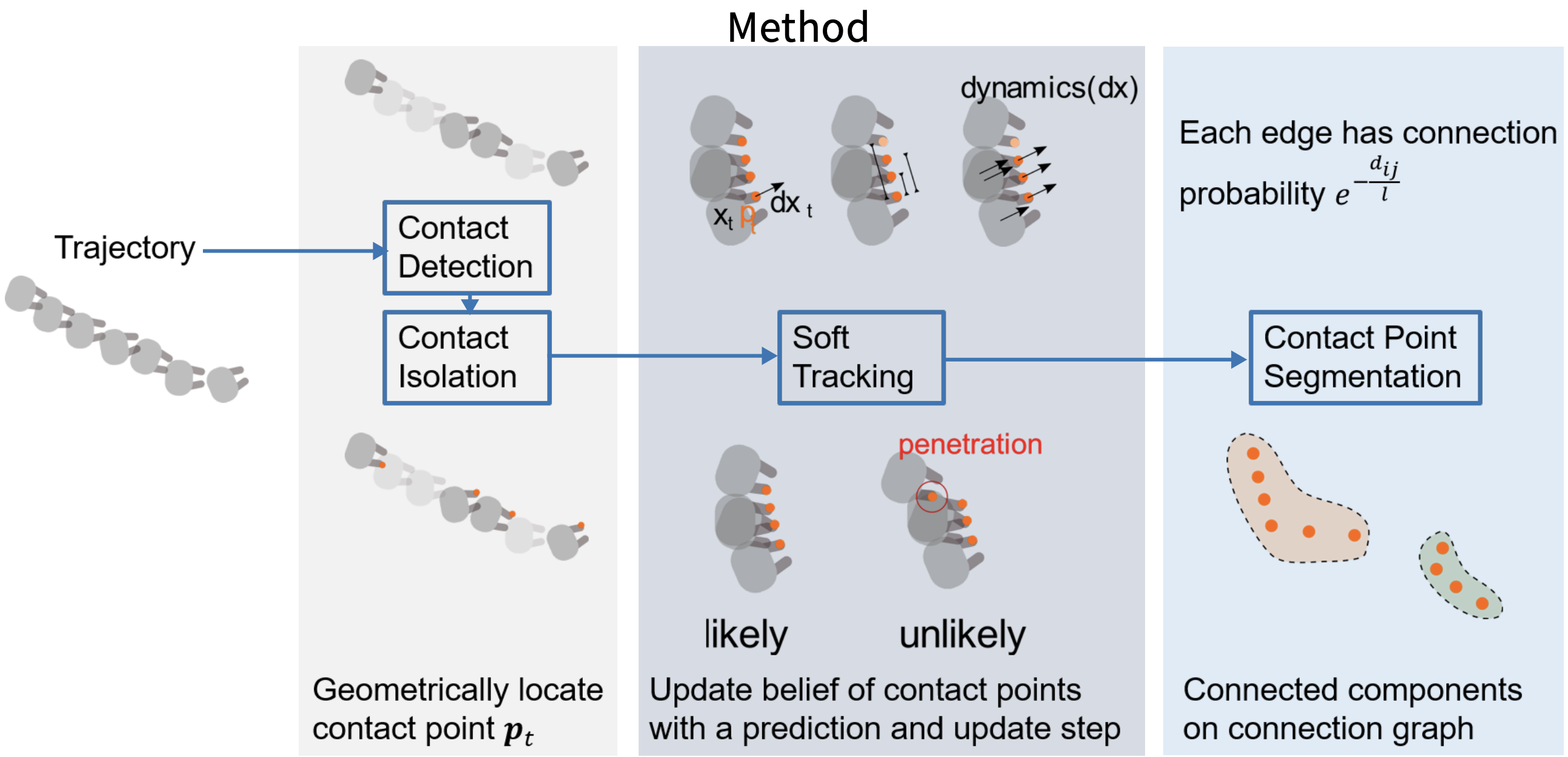

Retrieving an object from cluttered spaces such as cupboards, refrigerators, or bins requires tracking objects with limited or no visual sensing. In these scenarios, contact feedback is necessary to estimate the pose of the objects, yet the objects are movable while their shapes and number may be unknown, making the association of contacts with objects extremely difficult. While previous work has focused on multi-target tracking, the assumptions therein prohibit using prior methods given only the contact-sensing modality. Instead, this paper proposes the method Soft Tracking Using Contacts for Cluttered Objects (STUCCO) that tracks the belief over contact point locations and implicit object associations using a particle filter. This method allows ambiguous object associations of past contacts to be revised as new information becomes available. We apply STUCCO to the Blind Object Retrieval problem, where a target object of known shape but unknown pose must be retrieved from clutter. Our results suggest that our method outperforms baselines in four simulation environments, and on a real robot, where contact sensing is noisy. In simulation, we achieve grasp success of at least 65% on all environments while no baselines achieve over 5%.
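To make "implicit object associations" concrete, the sketch below shows one simple way associations could be derived from contact point positions alone: points closer than a threshold, directly or through a chain of neighbors, are treated as the same object. This is an illustrative assumption, not the paper's exact formulation; `group_contacts` and `radius` are hypothetical names, and the particle filter itself is omitted.

```python
import math


def group_contacts(points, radius):
    """Label contact points so that points within `radius` of each other
    (directly or via a chain of neighbors) share an object label.

    Hypothetical helper for illustration; uses union-find over all pairs.
    """
    parent = list(range(len(points)))

    def find(i):
        # Follow parent links to the root, compressing the path as we go.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    for i in range(len(points)):
        for j in range(i + 1, len(points)):
            if math.dist(points[i], points[j]) <= radius:
                parent[find(i)] = find(j)  # merge the two groups

    # Relabel roots as consecutive integers in order of first appearance.
    roots = {}
    return [roots.setdefault(find(i), len(roots)) for i in range(len(points))]


# Three contacts: the first two are close, the third is isolated.
contacts = [(0.0, 0.0), (0.1, 0.0), (0.3, 0.0)]
before = group_contacts(contacts, radius=0.12)

# A new contact bridges the gap, so the earlier grouping is revised.
after = group_contacts(contacts + [(0.2, 0.0)], radius=0.12)
```

Because the grouping is recomputed from positions rather than stored per contact, a later contact can merge groups that were previously separate, which mirrors the abstract's point that past associations can be revised as new information arrives.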

Highlights

- first paper to track multiple objects using only contact feedback

- uses new information to resolve ambiguous data associations, even for contacts made far in the past

- outperforms baselines in tracking metrics of contact error and segmentation quality using the Fowlkes-Mallows index

Higher FMI is better, while lower contact error is better. Error bars indicate the 20th to 80th percentile range. Ours is in blue.

- able to segment contact points into objects and track the target object's pose well enough to grasp it in simulated and real environments
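For readers unfamiliar with the Fowlkes-Mallows index (FMI) used above to score segmentation quality, here is a minimal sketch of the standard pair-counting definition, FMI = TP / sqrt((TP + FP)(TP + FN)), where a pair of contacts counts as a true positive if both the ground truth and the prediction place it in the same object. The function name and example labels are illustrative and are not taken from the paper's code; scikit-learn's `sklearn.metrics.fowlkes_mallows_score` computes the same quantity.

```python
from itertools import combinations
from math import sqrt


def fowlkes_mallows(true_labels, pred_labels):
    """Fowlkes-Mallows index between two labelings of the same points."""
    tp = fp = fn = 0
    for i, j in combinations(range(len(true_labels)), 2):
        same_true = true_labels[i] == true_labels[j]
        same_pred = pred_labels[i] == pred_labels[j]
        if same_true and same_pred:
            tp += 1  # pair correctly grouped together
        elif same_pred:
            fp += 1  # pair grouped together but belongs to different objects
        elif same_true:
            fn += 1  # pair split apart but belongs to the same object
    if tp == 0:
        return 0.0
    return tp / sqrt((tp + fp) * (tp + fn))


# Four contacts on two objects (illustrative labels).
truth = [0, 0, 1, 1]
perfect = fowlkes_mallows(truth, [1, 1, 0, 0])  # label names do not matter
partial = fowlkes_mallows(truth, [0, 0, 0, 1])  # one contact mis-assigned
```

The index ranges from 0 to 1 and depends only on which contacts are grouped together, not on the label values, so a segmentation that matches the ground truth up to renaming of objects scores 1.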

Bibtex

@ARTICLE{9696372,
  author={Zhong, Sheng and Fazeli, Nima and Berenson, Dmitry},
  journal={IEEE Robotics and Automation Letters},
  title={Soft Tracking Using Contacts for Cluttered Objects to Perform Blind Object Retrieval},
  year={2022},
  volume={7},
  number={2},
  pages={3507-3514},
  doi={10.1109/LRA.2022.3146915}}